What is Account Opening Fraud?

Account opening fraud occurs when a malicious actor opens a new consumer or business account (using stolen, synthetic, or blended identity information) to obtain credit, payment facilities, or promo codes, or to cash out quickly. No account takeover is required. The bad actor comes in “cold,” passes the surface checks, ramps up quickly to build just enough trust, then extracts funds and disappears. The playbook works on banks, neobanks, lenders, wallets, gaming platforms, and marketplaces.

How does it present itself? Clean docs, realistic selfies, and a brand-new device, followed quickly by high-velocity limit increase requests, gift-card purchases or crypto buys, payouts or refunds to high-risk instruments, and a quiet exit. Slow-and-steady fraud follows a similar playbook, but with smaller initial purchases, good on-time payment behavior, a limit increase, and then a cash-out binge and default (a bust-out). For business accounts, look for shell companies, straw directors, and addresses and devices recycled across “different” companies.

Why does it bypass controls? Because the identity “checks out” as valid, but it does not belong to the person holding the phone. Synthetic and blended identities easily evade simple matching rules, and no single control in isolation will reveal intent. You need to correlate across people, devices, networks, and time.
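
One way to picture that correlation is to link applications that share any artifact and look at the resulting clusters. The sketch below is illustrative only: the field names (`device_id`, `ip`, `address`, `phone`), the `link_applications` helper, and the sample records are assumptions, not part of any particular product, and exact-match linking is a simplification of what a production graph would do.

```python
from collections import defaultdict

# Hypothetical artifact fields; a real system would normalize values first.
ARTIFACT_FIELDS = ("device_id", "ip", "address", "phone")


def link_applications(applications):
    """Return clusters of application IDs that share at least one artifact."""
    parent = {app["id"]: app["id"] for app in applications}

    def find(x):
        # Follow parent pointers to the cluster root, shortening the path.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_seen = {}  # (field, value) -> first application ID that used it
    for app in applications:
        for field in ARTIFACT_FIELDS:
            value = app.get(field)
            if not value:
                continue
            key = (field, value)
            if key in first_seen:
                union(first_seen[key], app["id"])
            else:
                first_seen[key] = app["id"]

    clusters = defaultdict(set)
    for app_id in parent:
        clusters[find(app_id)].add(app_id)
    # Only clusters with more than one application are interesting.
    return [sorted(members) for members in clusters.values() if len(members) > 1]


applications = [
    {"id": "A1", "device_id": "dev-1", "ip": "203.0.113.7", "address": "1 Main St"},
    {"id": "A2", "device_id": "dev-1", "ip": "198.51.100.4", "address": "9 Oak Ave"},
    {"id": "A3", "device_id": "dev-2", "ip": "198.51.100.4", "address": "5 Elm Rd"},
    {"id": "A4", "device_id": "dev-3", "ip": "192.0.2.55", "address": "7 Pine Ct"},
]
print(link_applications(applications))  # [['A1', 'A2', 'A3']]
```

Here A1 and A2 share a device, and A2 and A3 share an IP, so all three land in one cluster even though no single field ties A1 to A3 directly. That transitive link is what a per-field matching rule would miss.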

Tell-tale signals:

- Short tenure plus high velocity immediately after onboarding; payout or refund requests within hours of sign-up.
- Device or IP overlap across “different” applicants; shared addresses or phone number ranges.
- Document crops that look too clean, selfie artifacts, rigid eye gaze, or uniform lighting across many document submissions.
- Thin or inconsistent history: claimed income inconsistent with early spending levels; mismatched geography between claimed and actual location.
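
The first signal in the list (short tenure plus high velocity) lends itself to a simple rule. The following is a minimal sketch, assuming events arrive as `(timestamp, event_type)` pairs; the thresholds, the event names, and the `early_life_flags` function are hypothetical and would need tuning against real data.

```python
from datetime import datetime, timedelta

# Hypothetical thresholds, chosen for illustration only.
VELOCITY_WINDOW = timedelta(hours=24)  # how long "early life" lasts
PAYOUT_WINDOW = timedelta(hours=6)     # "within hours" of sign-up
MAX_RISKY_EVENTS = 3                   # risky events tolerated in the window

RISKY_EVENTS = {
    "limit_increase_request",
    "gift_card_purchase",
    "crypto_buy",
    "payout_request",
    "refund_request",
}
PAYOUT_EVENTS = {"payout_request", "refund_request"}


def early_life_flags(opened_at, events):
    """Return the early-life risk flags raised by a new account's events."""
    flags = []

    # Count risky events that happened inside the velocity window.
    risky_in_window = [
        kind
        for ts, kind in events
        if kind in RISKY_EVENTS and timedelta(0) <= ts - opened_at <= VELOCITY_WINDOW
    ]
    if len(risky_in_window) >= MAX_RISKY_EVENTS:
        flags.append("high_velocity_after_onboarding")

    # Payout or refund requests very soon after sign-up.
    if any(
        kind in PAYOUT_EVENTS and timedelta(0) <= ts - opened_at <= PAYOUT_WINDOW
        for ts, kind in events
    ):
        flags.append("early_payout_or_refund")

    return flags


opened = datetime(2024, 1, 1, 9, 0)
events = [
    (datetime(2024, 1, 1, 9, 40), "limit_increase_request"),
    (datetime(2024, 1, 1, 10, 15), "gift_card_purchase"),
    (datetime(2024, 1, 1, 11, 30), "payout_request"),
    (datetime(2024, 1, 1, 12, 0), "login"),
]
print(early_life_flags(opened, events))
# ['high_velocity_after_onboarding', 'early_payout_or_refund']
```

A rule like this is only one layer. It says nothing about shared devices or document quality, so it is best treated as one input among the signals listed above rather than as a verdict.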

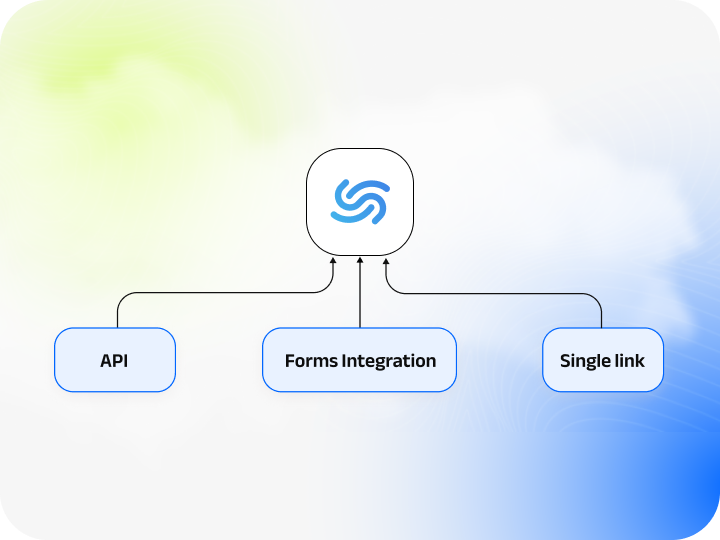

Controls that work: anchor the person first with rigorous identity verification, meaning document authenticity and tamper checks, selfie-to-ID biometric matching, and liveness checks to prevent spoofing. Pair that with device fingerprinting, velocity caps, deduplication rules across identity fields, graph analysis of shared artifacts, and step-up verification on risky events (limit increase requests, payout destination changes, credential edits). Design the onboarding journey to segment risk early: progressive limits for new accounts with no history, stricter controls for high-exposure products and flows, and evidence packs prepared in advance for transaction disputes. Then close the loop: every confirmed fraud case should feed back into rules and models, so that tomorrow’s signals fire a little sooner.
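
Progressive limits and step-up verification can be combined in a small decision function. This is a sketch under stated assumptions: the tier values, the event names, the `Account` type, and the three outcomes (`allow`, `step_up`, `decline`) are hypothetical, and a real policy would weigh many more inputs.

```python
from dataclasses import dataclass

# Hypothetical tiers: (minimum tenure in days, per-transaction limit).
LIMIT_TIERS = [(0, 200), (30, 1000), (90, 5000)]

# Risky events named in the text that should trigger step-up verification.
STEP_UP_EVENTS = {
    "limit_increase_request",
    "payout_destination_change",
    "credential_edit",
}


@dataclass
class Account:
    tenure_days: int


def current_limit(account):
    """Return the limit for the highest tier the account's tenure qualifies for."""
    limit = 0
    for min_days, tier_limit in LIMIT_TIERS:
        if account.tenure_days >= min_days:
            limit = tier_limit
    return limit


def decide(account, event_type, amount=0):
    """Return 'allow', 'step_up', or 'decline' for a single event."""
    if event_type in STEP_UP_EVENTS:
        return "step_up"  # re-verify the person before proceeding
    if amount > current_limit(account):
        return "decline"  # over the limit this tenure has earned
    return "allow"


print(decide(Account(tenure_days=5), "purchase", amount=150))    # allow
print(decide(Account(tenure_days=5), "purchase", amount=500))    # decline
print(decide(Account(tenure_days=45), "purchase", amount=500))   # allow
print(decide(Account(tenure_days=45), "payout_destination_change"))  # step_up
```

Keeping the tiers and the step-up event set as plain data makes the feedback loop easier to run: a confirmed fraud pattern can tighten a tier or add an event type without touching the decision logic.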

Key takeaways: new accounts aren’t “low risk” by default. Trust must be earned methodically, from layered signals, and with a policy that’s unapologetically firm.